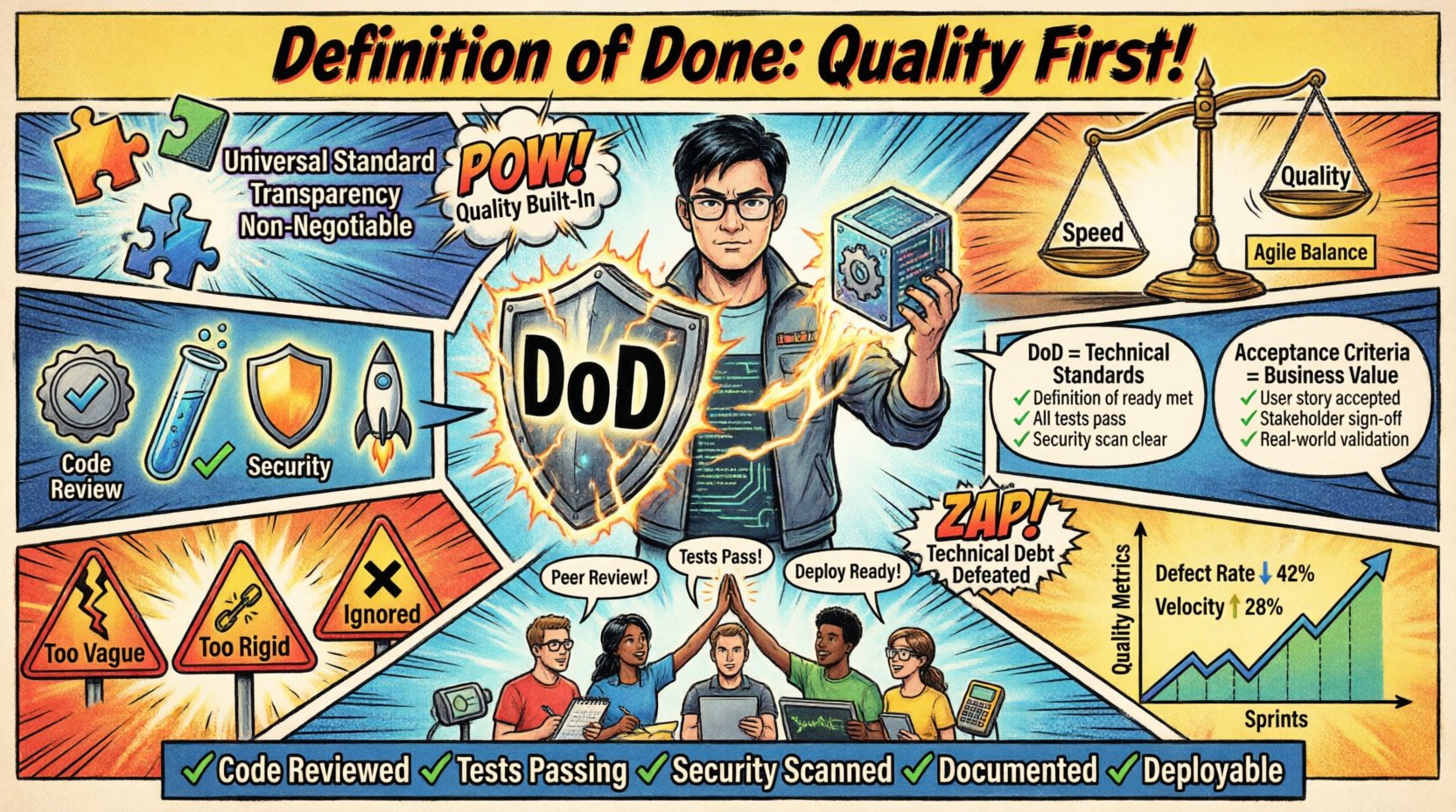

In Agile development, few concepts carry as much weight as the Definition of Done (DoD). It is the shared agreement between the Development Team and its stakeholders about what counts as a completed piece of work. A robust Definition of Done, however, is more than a simple checklist: it is a commitment to quality that carries through every sprint and every Increment.

When teams neglect the Definition of Done, technical debt accumulates silently. Features may appear to work on the surface, yet lack the stability required for long-term success. This guide describes how to establish, maintain, and apply a Definition of Done that puts quality ahead of speed. Aligning your team around clear standards creates a foundation for predictable delivery at a sustainable pace.

1. Understanding the Definition of Done 🧩

The Definition of Done is a formal description of the state of the Increment when it meets the quality measures required for the product. It is not merely a list of tasks; it is a contract. If a Product Backlog Item does not meet the Definition of Done, it cannot be released, even if the functionality is present.

Universal Standard: It applies to every single Product Backlog Item.

Transparency: It must be visible and accessible to all stakeholders.

Non-Negotiable: It cannot be compromised for the sake of velocity.

Without a clear Definition of Done, the meaning of a finished Increment becomes ambiguous. One team might consider work done as soon as the code is written, while another expects integration tests to pass as well. This misalignment creates friction and erodes trust. A robust Definition of Done removes the ambiguity by making the bar for completion explicit and setting it high.

2. Why Quality Must Be the Core Focus ⚖️

Quality is not an afterthought; it is a prerequisite for value. When a team rushes to finish work without adhering to quality standards, it often introduces defects that take significant effort to fix later. In general, the later a defect is discovered in the development lifecycle, the more it costs to fix.

Focusing on quality within the Definition of Done offers several tangible benefits:

Reduced Technical Debt: Standards prevent shortcuts that lead to future refactoring.

Increased Velocity: Teams move faster when they do not have to stop and fix broken builds.

Stakeholder Confidence: Consistent quality builds trust with the organization and customers.

Maintainability: Well-documented and tested code is easier to modify and extend.

By embedding quality checks directly into the Definition of Done, the team shifts from a mindset of inspection to a mindset of prevention. This proactive approach ensures that quality is built into the product, not tested into it at the end of the process.

3. Essential Components of a Strong DoD 🔍

A Definition of Done is rarely generic. It must be tailored to the specific context of the project, the technology stack, and the organizational constraints. However, certain elements are fundamental to ensuring robust quality across any Agile environment.

Code Quality Standards

Code must meet specific standards to ensure readability and maintainability. This includes adherence to coding conventions, naming standards, and architectural patterns agreed upon by the team.

Static Analysis: All code must pass automated static analysis checks with no critical issues.

Code Reviews: Every change must be reviewed by at least one peer to ensure knowledge sharing and error detection.

Documentation: Public APIs and complex logic must be documented for future reference.
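To keep criteria like these measurable rather than subjective, a team might encode them as an automated gate. The sketch below assumes a hypothetical `ChangeSummary` record that a CI job fills in from its static analyzer and code-review tool; the field names, the `"critical"` severity label, and the one-approval minimum are illustrative assumptions, not the output format of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeSummary:
    """Hypothetical per-change summary that a CI job might assemble."""

    # Severity label of each static-analysis finding, e.g. "critical" or "minor".
    finding_severities: list[str] = field(default_factory=list)
    # Number of peer approvals recorded by the code-review tool.
    peer_approvals: int = 0
    # Whether public APIs and complex logic touched by the change are documented.
    docs_updated: bool = False


def code_quality_failures(change: ChangeSummary, min_approvals: int = 1) -> list[str]:
    """Return the code-quality criteria the change fails; an empty list means it passes."""
    failures = []
    critical = sum(1 for severity in change.finding_severities if severity == "critical")
    if critical:
        failures.append(f"static analysis: {critical} critical issue(s)")
    if change.peer_approvals < min_approvals:
        failures.append(
            f"code review: {change.peer_approvals} approval(s), need {min_approvals}"
        )
    if not change.docs_updated:
        failures.append("documentation: not updated")
    return failures


if __name__ == "__main__":
    change = ChangeSummary(finding_severities=["minor", "critical"], peer_approvals=1)
    for failure in code_quality_failures(change):
        print(failure)
```

Returning the list of failures, rather than a single boolean, tells the developer exactly which criterion is blocking the change.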

Testing Requirements

Testing is the most critical pillar of quality. Relying on manual testing alone is insufficient for modern software delivery. Automation ensures repeatability and speed.

Unit Tests: Core logic must be covered by automated unit tests with a defined coverage threshold.

Integration Tests: Interfaces between components must be verified to ensure data flows correctly.

Regression Testing: Existing functionality must be validated to prevent new changes from breaking old features.

Performance Benchmarks: The system must meet defined performance criteria under load.
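"A defined coverage threshold" only becomes enforceable once the team picks a metric and a number. The following sketch assumes line coverage as the metric and an 80% threshold purely for illustration; the covered and total line counts would come from whatever coverage tool the team already uses.

```python
def line_coverage(covered_lines: int, total_lines: int) -> float:
    """Fraction of executable lines exercised by the unit tests."""
    if total_lines <= 0:
        raise ValueError("total_lines must be positive")
    if not 0 <= covered_lines <= total_lines:
        raise ValueError("covered_lines must be between 0 and total_lines")
    return covered_lines / total_lines


def meets_coverage_threshold(covered_lines: int, total_lines: int,
                             threshold: float = 0.80) -> bool:
    """True if coverage reaches the agreed threshold (80% is an assumed example value)."""
    return line_coverage(covered_lines, total_lines) >= threshold


if __name__ == "__main__":
    # 850 of 1000 lines covered is 85%, which clears an 80% threshold.
    print(meets_coverage_threshold(850, 1000))
```

Coverage works as a floor rather than proof of good tests: a suite can reach the threshold while asserting very little, which is one reason the Definition of Done pairs it with peer review.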

Security and Compliance

Security cannot be bolted on at the end. It must be integrated into the Definition of Done to protect the organization and its users.

Vulnerability Scans: Dependencies must be scanned for known security vulnerabilities.

Data Privacy: Sensitive data handling must comply with relevant regulations and policies.

Access Controls: Authentication and authorization mechanisms must be verified.

Deployment and Operations

A feature is not done until it can be deployed and operated in the target environment.

Deployment Scripts: Automated scripts must be available to deploy the code.

Monitoring: Logging and alerting must be configured for the new feature.

Environment Parity: The code must run successfully in a production-like environment.

4. The Process of Creating Your Team DoD 📝

Creating the Definition of Done is a collaborative effort; it cannot simply be imposed by management from outside the team. The Development Team owns the Definition of Done, but it should consult stakeholders to understand external constraints.

Review Current State: Assess what is currently considered done. Identify gaps where quality is lacking.

Gather Requirements: Collect input from operations, security, and compliance teams.

Draft the Standard: Create a preliminary list of criteria that addresses the identified gaps.

Validate with Stakeholders: Ensure the criteria are achievable and understood by the business.

Implement and Iterate: Start using the Definition of Done. Review it regularly during Sprint Retrospectives to adjust as needed.

This process ensures buy-in from the team. When developers feel ownership over the standards, they are more likely to adhere to them consistently.

5. Definition of Done vs. Acceptance Criteria 🆚

It is common to confuse the Definition of Done with Acceptance Criteria. While both define quality, they serve different purposes.

| Aspect | Definition of Done (DoD) | Acceptance Criteria |
|---|---|---|
| Scope | Applies to the entire Increment. | Applies to a specific User Story. |
| Consistency | Remains relatively static over time. | Varies per item based on functionality. |
| Focus | Technical and Quality Standards. | Functional Behavior and Business Value. |
| Example | Code reviewed, tests passed. | System accepts input between 1-100. |

Keeping this distinction clear helps prevent scope creep. Acceptance Criteria change from story to story, but the Definition of Done should remain stable to maintain a consistent quality baseline.
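The contrast is easier to see in code. Below, a hypothetical `accepts_input` function implements the example Acceptance Criterion from the table, reading "between 1-100" as inclusive of both ends (an assumption the team would confirm with the Product Owner). The boundary tests belong to this one story; the requirement that such tests exist and pass is what the Definition of Done adds to every story.

```python
import unittest


def accepts_input(value: int) -> bool:
    """Hypothetical feature under test: accept integers from 1 to 100 inclusive."""
    return 1 <= value <= 100


class AcceptanceCriteriaTest(unittest.TestCase):
    """Story-specific checks derived from a single Acceptance Criterion."""

    def test_accepts_boundaries(self):
        self.assertTrue(accepts_input(1))
        self.assertTrue(accepts_input(100))

    def test_rejects_values_outside_range(self):
        self.assertFalse(accepts_input(0))
        self.assertFalse(accepts_input(101))
```

Saved as a module, these tests run under the standard `python -m unittest` runner followed by the module name.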

6. Common Pitfalls in Defining Completion 🚫

Teams often stumble when creating or maintaining their Definition of Done. Recognizing these pitfalls early can save significant time and effort.

Too Vague: Phrases like “Code is clean” are subjective. Use measurable terms like “Linting passes with zero errors”.

Too Rigid: Standards should evolve. If the technology stack changes, the Definition of Done must change with it.

Too Complex: If the Definition of Done takes weeks to complete, it blocks delivery. Balance thoroughness with efficiency.

Ignored by the Team: If the team does not respect the Definition of Done, it becomes meaningless. Leadership must support enforcement.

Ignoring Non-Functional Needs: Focusing only on features while ignoring performance, security, or usability leads to fragile products.

7. Maintaining and Evolving the Standard 🔄

The Definition of Done is not a one-time task. It is a living document that requires continuous improvement. As the team matures and technologies evolve, the standards must adapt.

During Sprint Retrospectives, dedicate time to discuss the Definition of Done. Ask the following questions:

Did we encounter any quality issues this sprint?

Were there any tasks that took longer than expected due to quality checks?

Is there a new technology or standard we should incorporate?

Are we consistently able to meet the current criteria?

Adding criteria is generally easier than removing them. As the team gains confidence, it can introduce stricter standards. Remove a criterion only when the practice it enforces proves ineffective or redundant.

8. A Practical Checklist for Quality 📋

To assist in implementation, consider the following checklist as a baseline. This list is not exhaustive but covers the essential areas required for a robust quality assurance process.

✅ All code reviewed and approved by peers.

✅ Unit tests written and passing.

✅ Integration tests executed successfully.

✅ Static code analysis completed with no critical findings.

✅ Documentation updated for new features.

✅ Security scan performed on dependencies.

✅ Deployed to staging environment.

✅ Performance tested against baseline metrics.

✅ User acceptance testing passed.

✅ No known defects logged in the tracker.

✅ Rollback plan documented.

✅ Monitoring and alerting configured.

Teams should customize this list to fit their specific needs. Some may require accessibility testing, while others may focus more on database integrity.
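One way to keep such a checklist transparent and applied to every item is to store it as data that each item is evaluated against. In this sketch the criteria are a subset of the checklist above, and the `status` dictionary stands in for whatever the team's tracker or CI pipeline reports; both are hypothetical. An item counts as done only when every criterion holds, mirroring the rule that an item failing the Definition of Done cannot be released.

```python
# A subset of the checklist above, kept as data so it can be versioned and shared.
DEFINITION_OF_DONE = (
    "Code reviewed and approved by peers",
    "Unit tests written and passing",
    "Integration tests executed successfully",
    "Security scan performed on dependencies",
    "Deployed to staging environment",
    "Rollback plan documented",
)


def unmet_criteria(status: dict[str, bool],
                   criteria: tuple[str, ...] = DEFINITION_OF_DONE) -> list[str]:
    """Return the criteria not satisfied; a criterion missing from status counts as unmet."""
    return [criterion for criterion in criteria if not status.get(criterion, False)]


def is_done(status: dict[str, bool]) -> bool:
    """An item is done only if it meets every criterion in the Definition of Done."""
    return not unmet_criteria(status)


if __name__ == "__main__":
    item_status = {criterion: True for criterion in DEFINITION_OF_DONE}
    item_status["Rollback plan documented"] = False
    print(is_done(item_status), unmet_criteria(item_status))
```

Treating a missing entry as unmet is a deliberate choice: a check nobody ran should block completion rather than pass silently.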

9. Integrating DoD with Continuous Improvement 📈

Quality is a journey, not a destination. The Definition of Done acts as the compass for this journey. By consistently applying these standards, the team creates a culture of excellence.

When a team consistently meets a high Definition of Done, the organization begins to trust the output. This trust allows for faster decision-making and reduced oversight. The team can focus on innovation rather than fixing broken processes.

Furthermore, a robust Definition of Done supports the principle of Technical Excellence. It ensures that the software architecture remains clean and adaptable. This is crucial for long-term agility. If the codebase becomes brittle, the ability to respond to change diminishes.

Leadership plays a vital role here. They must protect the Definition of Done from pressure to cut corners. When deadlines loom, the temptation to skip tests or documentation is high. Standing firm on quality standards demonstrates a commitment to long-term value over short-term gains.

10. Measuring Success and Impact 🎯

How do you know if your Definition of Done is working? You need metrics that reflect quality and flow.

Defect Rate: Track the number of bugs reported in production after each release. A downward trend suggests improving quality.

Lead Time: Measure how long it takes from code completion to production. A stable or decreasing lead time suggests efficient processes.

Build Success Rate: Monitor the percentage of builds that pass all automated tests without manual intervention.

Team Satisfaction: Survey the team regularly. Do they feel the Definition of Done is helping or hindering them?

These metrics provide data-driven insights. They help the team understand if they are maintaining the right balance between speed and quality.
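Most of these metrics reduce to simple arithmetic over data teams already collect. This sketch assumes hypothetical inputs: production defect counts per release, `(completed, deployed)` timestamp pairs per change, and a pass/fail flag per build. It computes a least-squares defect trend, the median lead time, and the build success rate; team satisfaction comes from surveys and is left out.

```python
from datetime import datetime, timedelta
from statistics import median


def defect_trend(defects_per_release: list[int]) -> float:
    """Least-squares slope of production defects per release (negative means improving)."""
    n = len(defects_per_release)
    if n < 2:
        raise ValueError("need at least two releases to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(defects_per_release) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(defects_per_release))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def median_lead_time(changes: list[tuple[datetime, datetime]]) -> timedelta:
    """Median time from code completion to production, given (completed, deployed) pairs."""
    return median(deployed - completed for completed, deployed in changes)


def build_success_rate(build_passed: list[bool]) -> float:
    """Share of builds that passed all automated tests without manual intervention."""
    if not build_passed:
        raise ValueError("no builds recorded")
    return sum(build_passed) / len(build_passed)


if __name__ == "__main__":
    print(defect_trend([8, 6, 5, 3]))  # -1.6 defects per release
    t0 = datetime(2024, 1, 1, 9, 0)
    changes = [(t0, t0 + timedelta(hours=h)) for h in (4, 30, 6)]
    print(median_lead_time(changes))  # 6:00:00
    print(build_success_rate([True, True, False, True]))  # 0.75
```

The median is used for lead time because a single long-running change would skew an average; that is a design choice for this sketch, not something the metric requires.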

11. The Human Element of Quality 👥

While tools and checklists are essential, the human element remains central. Quality is a shared responsibility. Every member of the Development Team must feel empowered to stop the line if quality is compromised.

Psychological safety is required for this to work. Team members must feel safe to admit mistakes without fear of retribution. When a defect is found, the focus should be on fixing the process, not blaming the person. This culture of continuous improvement ensures that the Definition of Done remains relevant and effective.

Training and education also play a part. If team members lack the skills to implement certain quality standards, the Definition of Done will fail. Invest in upskilling the team to meet the evolving standards.

12. Final Thoughts on Sustainable Quality 🌱

Building a product is not just about writing code. It is about building a system that delivers value reliably. The Definition of Done is the mechanism that ensures this reliability.

By rigorously defining what Done means, you eliminate ambiguity and set a high bar for the team. This leads to a stable product, a healthy team, and satisfied stakeholders. Remember that quality is not a phase; it is an ongoing practice.

Start small if necessary, but start now. Identify one area where quality is lacking and add a criterion to the Definition of Done. Review it in the next retrospective. Over time, these small changes compound into a robust quality assurance framework.

Commit to the standard. Respect the process. And watch as your team’s output becomes a benchmark for excellence.