Component modeling serves as the backbone of software architecture documentation. It provides a visual representation of the structural organization of a system, defining how distinct parts interact to deliver functionality. As technology landscapes shift rapidly, the methods used to model these components are undergoing significant transformation. Architects and engineers must stay informed about emerging patterns to maintain system integrity and adaptability.

This guide explores the trajectory of component modeling. We examine how automation, artificial intelligence, and distributed systems are reshaping the way we design and document software structures. Understanding these shifts allows teams to build systems that are resilient, scalable, and easier to maintain over time.

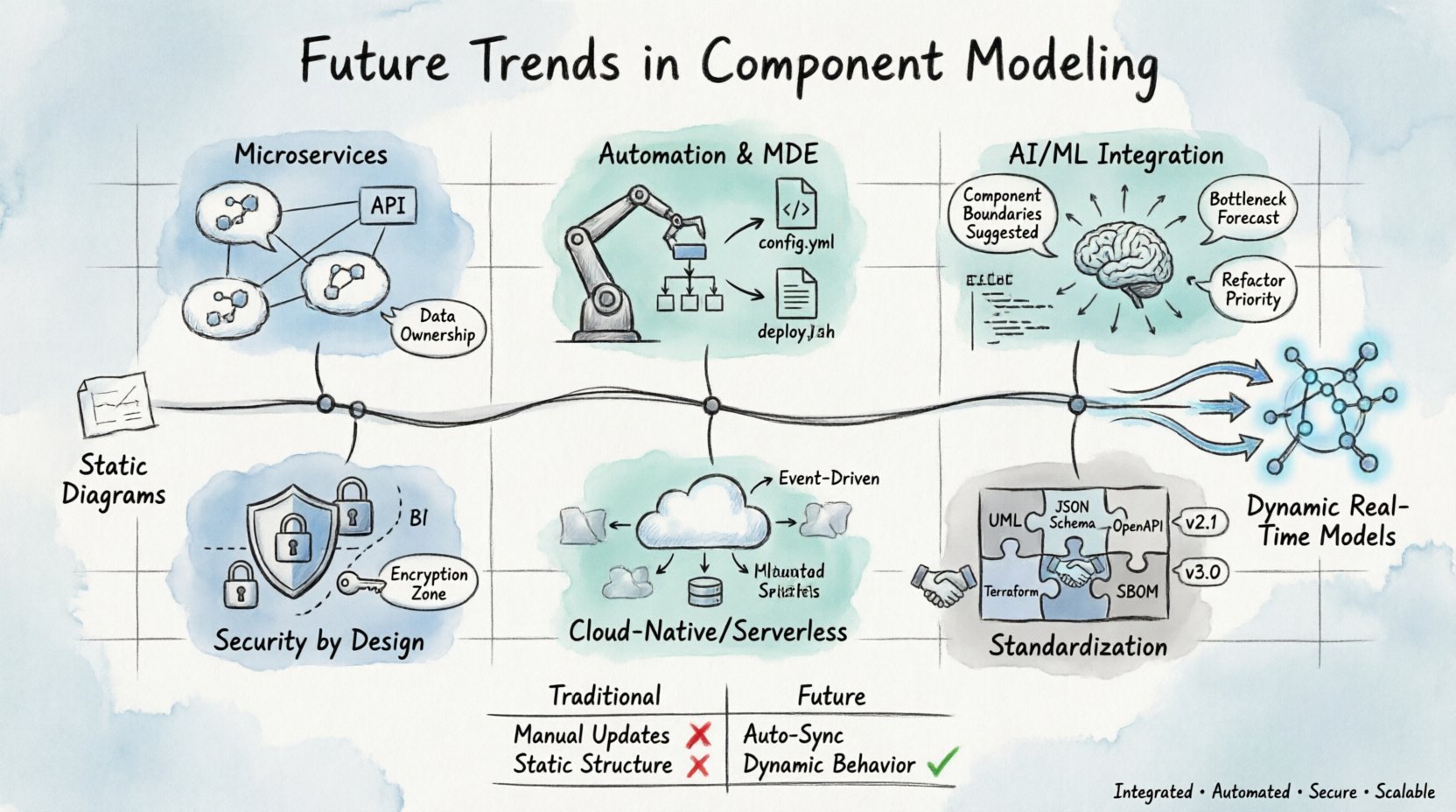

The Evolution of Static Diagrams ⏳

Traditionally, component diagrams were static snapshots. They depicted the state of a system at a specific moment in time. Architects created these visuals to communicate high-level design decisions to stakeholders. While effective for initial planning, static models often became outdated quickly as the codebase evolved.

The disconnect between documentation and implementation created technical debt. Teams spent excessive time updating diagrams to match the reality of the code. This maintenance burden often led to documentation being ignored entirely. Modern trends address this by integrating modeling directly into the development lifecycle.

- Dynamic Visualization: Models now reflect real-time system states rather than theoretical designs.

- Version Control Integration: Diagram versions are tracked alongside source code commits.

- Live Data Binding: Model elements pull data from running environments to ensure accuracy.

By moving away from static documentation, teams reduce the friction between design and execution. The goal is to maintain a single source of truth that remains accurate without manual intervention.
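One way to keep a model honest is to compare it against what the code actually does. The sketch below assumes the documented dependencies and the observed ones (from import scanning or runtime traces, for example) have already been collected into two sets. The component names and the `find_drift` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    source: str
    target: str


def find_drift(documented: set, observed: set) -> dict:
    """Compare the documented model with dependencies observed in the code."""
    return {
        # Present in the code but missing from the model: the diagram is stale.
        "undocumented": observed - documented,
        # Present in the model but absent from the code: the diagram overstates.
        "obsolete": documented - observed,
    }


# Hypothetical data: what the diagram says vs. what was found in the codebase.
documented = {Dependency("web", "orders"), Dependency("orders", "billing")}
observed = {Dependency("web", "orders"), Dependency("orders", "inventory")}

drift = find_drift(documented, observed)
for kind, deps in drift.items():
    for dep in sorted(deps, key=lambda d: (d.source, d.target)):
        print(f"{kind}: {dep.source} -> {dep.target}")
```

Run in a build pipeline, a check like this could fail the build whenever either set is non-empty, so drift is caught at commit time rather than discovered months later.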

Microservices and Distributed Boundaries 🌐

The shift toward microservices architecture has fundamentally changed component boundaries. In monolithic systems, components were often tightly coupled modules within a single process. In distributed systems, components represent independent services that communicate over networks.

Modeling these boundaries requires a deeper understanding of network latency, fault tolerance, and data consistency. The visual representation of a component must now include information about its deployment environment, communication protocols, and security constraints.

Key considerations for modeling distributed components include:

- Service Contracts: Defining clear interfaces between services to prevent tight coupling.

- Data Ownership: Identifying which component owns specific data sets to avoid duplication.

- Failure Modes: Visualizing how components behave when dependencies fail.

Architects must model the infrastructure layer as part of the component structure. This includes load balancers, message queues, and API gateways. Treating infrastructure as a first-class citizen in modeling ensures that scalability and resilience are designed into the system from the start.
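The considerations above can be captured as data rather than only as boxes and arrows. This is a minimal sketch, assuming a hypothetical `Component` record with a protocol, owned data sets, dependencies, and a declared fallback per dependency. It flags data sets with more than one owner and dependencies with no documented failure behavior.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Component:
    name: str
    protocol: str                                    # e.g. "http", "grpc", "amqp"
    owns: set = field(default_factory=set)           # data sets this component owns
    depends_on: set = field(default_factory=set)     # names of other components
    fallback: dict = field(default_factory=dict)     # dependency -> behavior on failure


def ownership_conflicts(components):
    """Return data sets claimed by more than one component."""
    owners = defaultdict(list)
    for component in components:
        for data_set in component.owns:
            owners[data_set].append(component.name)
    return {ds: sorted(names) for ds, names in owners.items() if len(names) > 1}


def unhandled_failures(components):
    """Return dependencies that have no declared failure behavior."""
    result = {}
    for component in components:
        missing = component.depends_on - set(component.fallback)
        if missing:
            result[component.name] = sorted(missing)
    return result


# Hypothetical services, including a message queue as an infrastructure component.
components = [
    Component("orders", "http", owns={"orders"}, depends_on={"billing", "queue"},
              fallback={"billing": "queue and retry", "queue": "reject request"}),
    Component("billing", "grpc", owns={"invoices", "orders"}, depends_on={"ledger"}),
    Component("queue", "amqp"),
]

print(ownership_conflicts(components))
print(unhandled_failures(components))
```

Here `billing` wrongly claims the `orders` data set and has no stated behavior for a `ledger` outage, so both checks report it.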

Automation and Model-Driven Engineering 🤖

Manual modeling is prone to human error and inconsistency. Model-Driven Engineering (MDE) automates the creation of artifacts from high-level models. This approach reduces the risk of discrepancies between the design and the actual implementation.

Automation enables the generation of boilerplate code, configuration files, and deployment scripts directly from component models. This streamlines the development process and allows engineers to focus on business logic rather than repetitive setup tasks.
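As an illustration of generating configuration from a model, the sketch below derives a per-service descriptor from a small dictionary. The model shape, the service names, and the descriptor fields are all assumptions made for the example, not the output format of any particular tool.

```python
import json

# Hypothetical component model: each service has a port and its dependencies.
model = {
    "orders": {"port": 8080, "depends_on": ["billing"]},
    "billing": {"port": 8081, "depends_on": []},
}


def render_service_config(model, name):
    """Render one deployment descriptor from a model entry."""
    spec = model[name]
    return {
        "service": name,
        "listen": f"0.0.0.0:{spec['port']}",
        # Upstream addresses are derived from the model, not typed by hand.
        "upstreams": {
            dep: f"http://{dep}:{model[dep]['port']}" for dep in spec["depends_on"]
        },
    }


configs = {name: render_service_config(model, name) for name in model}
print(json.dumps(configs["orders"], indent=2))
```

Because the upstream address for `billing` is read from the model, changing its port in one place updates every generated descriptor that refers to it.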

Benefits of automation in modeling include:

- Consistency: Automated processes apply the same rules across all generated artifacts.

- Speed: Generating code from a model is far faster than writing the same artifacts by hand, which accelerates iteration cycles.

- Validation: Models can be validated against architectural rules before any code is written.

As tools improve, the line between modeling and coding blurs. Engineers may find themselves designing systems in a visual environment that compiles directly into production-ready infrastructure. This reduces the cognitive load required to switch between design tools and coding environments.
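Validating a model against architectural rules can be quite simple in practice. The sketch below assumes a hypothetical three-layer rule (a component may depend only on its own layer or a lower one) and reports every dependency that points upward.

```python
# Hypothetical layers, ordered from top to bottom.
LAYER_ORDER = ["ui", "service", "data"]


def layering_violations(layers, dependencies):
    """Return dependencies where a lower layer reaches up into a higher one."""
    rank = {layer: index for index, layer in enumerate(LAYER_ORDER)}
    violations = []
    for source, target in dependencies:
        if rank[layers[target]] < rank[layers[source]]:
            violations.append((source, target))
    return violations


# Hypothetical model: which layer each component lives in, and who calls whom.
layers = {"web": "ui", "orders": "service", "orders_db": "data"}
dependencies = [("web", "orders"), ("orders", "orders_db"), ("orders_db", "web")]

violations = layering_violations(layers, dependencies)
print(violations)  # [('orders_db', 'web')]
```

A check like this runs on the model alone, so a rule violation can be rejected before any code is generated or written.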

AI and Machine Learning Integration 🧠

Artificial Intelligence is beginning to influence how component models are created and maintained. Machine learning algorithms can analyze existing codebases to suggest optimal component structures. They identify patterns in how data flows through a system and recommend boundaries that minimize coupling.

AI-driven modeling tools can also predict potential bottlenecks. By analyzing historical performance data, the system suggests where to add caching layers or increase redundancy. This proactive approach helps architects address performance issues before they impact users.

Potential applications of AI in modeling include:

- Automated Refactoring: Suggesting component splits or merges based on complexity metrics.

- Dependency Analysis: Visualizing hidden dependencies that are not immediately obvious in the code.

- Compliance Checking: Automatically flagging components that violate security or regulatory standards.

While AI does not replace human judgment, it provides valuable insights that guide architectural decisions. The role of the architect shifts from drawing diagrams to validating and refining the recommendations made by intelligent systems.
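The sketch below is not machine learning. It computes a classic coupling metric, instability (outgoing dependencies divided by all dependencies of a component), which is the kind of signal a recommendation tool could plausibly use as input. The dependency edges and the review threshold are hypothetical.

```python
from collections import Counter


def coupling(dependencies):
    """Return {component: (fan_in, fan_out, instability)} for a set of edges."""
    fan_out = Counter(source for source, _ in dependencies)
    fan_in = Counter(target for _, target in dependencies)
    metrics = {}
    for name in set(fan_out) | set(fan_in):
        total = fan_in[name] + fan_out[name]  # always > 0: name appears in an edge
        metrics[name] = (fan_in[name], fan_out[name], fan_out[name] / total)
    return metrics


# Hypothetical dependency edges (source depends on target).
dependencies = {
    ("web", "orders"), ("web", "billing"),
    ("orders", "billing"), ("orders", "db"),
    ("billing", "db"),
}

metrics = coupling(dependencies)

# Flag components with many connections in both directions as review candidates.
REVIEW_THRESHOLD = 3  # assumed cutoff, chosen only for this example
candidates = sorted(
    name for name, (fan_in, fan_out, _) in metrics.items()
    if fan_in > 0 and fan_out > 0 and fan_in + fan_out >= REVIEW_THRESHOLD
)
print(candidates)  # ['billing', 'orders']
```

The output is a list of candidates for a human to examine, not a decision. Whether `orders` should actually be split still depends on context that the metric cannot see.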

Security and Compliance by Design 🔒

Security is no longer an afterthought added at the end of development. It must be embedded into the component model itself. Regulatory requirements and security best practices need to be represented as structural constraints within the diagram.

Future modeling standards will likely require explicit definitions of trust boundaries. Each component would then declare its data handling policies and access controls. This visibility allows security teams to audit the architecture without reviewing every line of code.

Key security modeling elements include:

- Authentication Flows: Visualizing how identity is verified across component boundaries.

- Encryption Zones: Marking areas where data must be encrypted in transit or at rest.

- Privilege Escalation Paths: Mapping how access rights move between components.

Integrating security into the model ensures that compliance is maintained throughout the system lifecycle. It simplifies the audit process and reduces the risk of vulnerabilities slipping through the cracks during development.
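Once trust zones and encryption are recorded in the model, an audit rule can be expressed in a few lines. This sketch assumes a hypothetical mapping of components to zones and a list of data flows. It reports every flow that crosses a zone boundary without encryption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    source: str
    target: str
    encrypted: bool


def unencrypted_crossings(zones, flows):
    """Return flows that cross a trust boundary without encryption in transit."""
    return [
        flow for flow in flows
        if zones[flow.source] != zones[flow.target] and not flow.encrypted
    ]


# Hypothetical trust zones and data flows.
zones = {"browser": "public", "gateway": "dmz", "orders": "internal", "orders_db": "internal"}
flows = [
    Flow("browser", "gateway", encrypted=True),
    Flow("gateway", "orders", encrypted=False),    # crosses dmz -> internal in the clear
    Flow("orders", "orders_db", encrypted=False),  # stays inside one zone
]

findings = unencrypted_crossings(zones, flows)
for flow in findings:
    print(f"unencrypted boundary crossing: {flow.source} -> {flow.target}")
```

The rule here is deliberately narrow. A stricter policy might also require encryption inside a zone, which would mean dropping the zone comparison.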

Cloud-Native and Serverless Considerations ☁️

The rise of cloud-native technologies has introduced new constraints for component modeling. Serverless architectures, in particular, challenge traditional views of component boundaries. In serverless environments, components are often ephemeral functions that scale automatically.

Modeling these systems requires a focus on statelessness and event-driven interactions. The diagram must represent the flow of events rather than the persistence of state. This shift impacts how teams visualize data storage and message passing.

Considerations for cloud-native modeling include:

- State Management: Defining how external state is stored when components themselves are stateless.

- Scaling Policies: Indicating how components respond to changes in load.

- Managed Services: Representing third-party services as black-box components.

Architects must understand the limitations of the cloud provider. Modeling tools need to abstract these limitations while remaining accurate enough to guide implementation. This balance ensures that the system is portable without sacrificing performance.
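An event-driven model can be represented as functions keyed by the event that triggers them. The sketch below, using hypothetical function and event names, traces which functions a starting event eventually reaches and lists events that nothing consumes.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Function:
    name: str
    trigger: str                              # event that invokes this function
    emits: list = field(default_factory=list)
    state_store: Optional[str] = None         # external store, since the function is stateless


def trace(functions, start_event):
    """Return function names reached from start_event, in breadth-first order."""
    by_trigger = defaultdict(list)
    for fn in functions:
        by_trigger[fn.trigger].append(fn)
    reached, seen, queue = [], {start_event}, deque([start_event])
    while queue:
        event = queue.popleft()
        for fn in by_trigger.get(event, []):
            reached.append(fn.name)
            for emitted in fn.emits:
                if emitted not in seen:
                    seen.add(emitted)
                    queue.append(emitted)
    return reached


def unconsumed_events(functions):
    """Return emitted events that no function is triggered by."""
    emitted = {event for fn in functions for event in fn.emits}
    return emitted - {fn.trigger for fn in functions}


functions = [
    Function("validate_order", "order.received", ["order.validated"]),
    Function("charge_card", "order.validated", ["payment.captured"], state_store="payments_table"),
    Function("send_receipt", "payment.captured", ["receipt.sent"]),
]

path = trace(functions, "order.received")
dangling = unconsumed_events(functions)
print(path)
print(dangling)
```

An unconsumed event is not necessarily a defect, since it may be a terminal notification, but listing such events makes that an explicit decision instead of an oversight.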

Standardization and Interoperability 📏

As systems become more complex, the need for standardized modeling languages grows. Interoperability between different tools and platforms ensures that models can be shared across teams and organizations. This is critical for large enterprises with diverse technology stacks.

Open standards prevent vendor lock-in and allow teams to switch tools without losing their architectural documentation. Industry bodies are working on formats that support both visual representation and machine-readable data.

Key aspects of standardization include:

- Common Data Formats: Using open formats for exchanging model data.

- API Integration: Defining how tools can communicate with each other.

- Versioning Schemes: Ensuring backward compatibility in model formats.

Adopting standards facilitates collaboration between development, operations, and security teams. It ensures that everyone is working from the same architectural definition, reducing misunderstandings and errors.
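To make the versioning point concrete, here is a minimal sketch of a machine-readable model format with a schema version and a backward-compatible loader. The format itself is invented for this example: version 1 is assumed to store a bare list of component names, and version 2 a list of objects.

```python
import json

CURRENT_VERSION = 2  # hypothetical schema version of this example format


def dump_model(components):
    """Serialize components in the current format."""
    return json.dumps(
        {"schema_version": CURRENT_VERSION, "components": components}, sort_keys=True
    )


def load_model(text):
    """Load a model, upgrading older documents and rejecting newer ones."""
    doc = json.loads(text)
    version = doc.get("schema_version", 1)  # assume unversioned documents are v1
    if version > CURRENT_VERSION:
        raise ValueError(f"unsupported schema_version {version}")
    if version == 1:
        # v1 stored only names, so upgrade each entry to the v2 object shape.
        doc = {
            "schema_version": CURRENT_VERSION,
            "components": [{"name": n, "depends_on": []} for n in doc["components"]],
        }
    return doc


legacy = '{"components": ["orders", "billing"]}'
upgraded = load_model(legacy)
print(upgraded["components"][0])  # {'name': 'orders', 'depends_on': []}
```

Rejecting unknown future versions outright is one possible design choice. A more lenient loader could ignore fields it does not recognize, at the cost of silently dropping information.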

Comparison of Traditional vs. Future Approaches

| Feature | Traditional Modeling | Future Modeling Trends |
|---|---|---|
| Update Frequency | Manual, periodic updates | Continuous, automated sync |
| Accuracy | Low, prone to drift | High, real-time validation |
| Tooling | Standalone diagram editors | Integrated IDE plugins |
| Focus | Static structure | Dynamic behavior & state |
| Security | Added post-design | Built into the model |

Key Trends and Their Impact

| Trend | Impact on Architecture |
|---|---|
| AI-Assisted Design | Reduces cognitive load, improves pattern recognition |
| Microservices | Increases complexity, requires stronger boundaries |
| Cloud-Native | Demands stateless design, event-driven flows |
| Automation | Speeds up delivery, reduces human error |
| Security Integration | Ensures compliance, reduces vulnerability surface |

Looking Ahead 🔮

The future of component modeling is dynamic and deeply integrated with the development process. It is moving away from being a separate documentation activity to becoming a core part of the engineering workflow. This shift empowers teams to build systems that are more robust and easier to evolve.

Staying current with these trends requires a commitment to continuous learning. Teams should evaluate their current modeling practices and identify areas where automation or standardization can add value. By embracing these changes, organizations can improve their ability to deliver high-quality software in a rapidly changing environment.

The journey toward advanced modeling is incremental. It involves refining processes, adopting new tools, and fostering a culture of accuracy. As technology continues to evolve, the principles of clear, maintainable architecture will remain constant. The tools will change, but the need for a shared understanding of system design will endure.